As artificial intelligence (AI) becomes more integral to our lives, it’s crucial to consider how it’s developed. AI is transforming sectors like healthcare, finance, and recruitment, but if we’re not careful, we risk creating biased systems that lead to unfair treatment and privacy invasions.

This is where ethical AI comes into play. By prioritizing transparency, fairness, and accountability, we can minimize bias and foster trust. Without these principles, AI might reinforce societal inequalities. It’s vital for developers, businesses, and policymakers to collaborate in promoting responsible innovation.

In this article, we’ll discuss key principles and best practices for building ethical AI. By adhering to global regulations, we can ensure AI positively impacts society. Let’s explore how we can shape a better future with AI!

Why Ethical AI Development Matters

AI is transforming industries, from healthcare to finance, influencing critical decisions that impact people’s lives. However, without ethical safeguards, AI can reinforce biases, spread misinformation, and create unfair outcomes. Implementing ethical AI development best practices promotes fairness, accountability, and trust in AI-driven systems.

Impact on Society: How AI Influences Real-World Decisions

AI is integrated into hiring processes, lending decisions, medical diagnoses, and law enforcement. A well-developed AI can improve efficiency and accuracy, but biased AI can lead to discrimination, such as denying loans to specific demographics or misidentifying individuals in facial recognition systems. Ensuring AI ethics helps create technology that benefits society rather than harming it.

The Risks of Unethical AI: Examples of AI Bias, Discrimination, and Misinformation

AI models learn from historical data; if that data contains biases, the AI can amplify them. For example, Amazon’s AI hiring tool was scrapped after it showed bias against female candidates. Similarly, some AI-driven healthcare models have prioritized white patients over Black patients due to skewed training data. Unchecked AI can also spread misinformation through deepfakes and biased content recommendations, negatively influencing public opinion.

Trust and Compliance: How Ethical AI Builds Public Trust and Aligns with Global Regulations

Consumers and businesses are becoming more aware of AI ethics, leading to increased demand for transparency and fairness. Governments worldwide are introducing AI regulations, such as the EU AI Act, to prevent harmful AI practices. Ethical AI development helps companies comply with legal standards and fosters trust among users, ensuring long-term success and credibility in AI-driven solutions.

Key Principles of Ethical AI Development

To ensure responsible and trustworthy AI, developers must follow core ethical principles. These include fairness, transparency, accountability, and data privacy. Without these safeguards, AI can reinforce biases, make opaque decisions, and pose risks to users. By incorporating ethical AI development best practices, businesses can create AI systems that are fair, explainable, and secure.

Fairness & Bias Mitigation – Ensuring AI Does Not Reinforce Discrimination

AI models must be trained on diverse and representative datasets to avoid biases. If AI learns from historically biased data, it can perpetuate discrimination in hiring, lending, and law enforcement. Techniques like algorithmic fairness testing and bias audits help identify and correct unfair patterns. Ensuring fairness in AI helps prevent discrimination based on race, gender, or socioeconomic status.
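One simple form of fairness testing compares how often each group receives a favorable decision. The sketch below is illustrative only: the function names, the sample data, and the group labels are assumptions made for this example, and the ratio it computes is just one of several fairness measures.

```python
from collections import defaultdict

def selection_rates(decisions, groups):
    """Return the share of favorable decisions (1 = approved) per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += decision
    return {g: positives[g] / totals[g] for g in totals}

def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0

# Hypothetical audit sample: 1 = loan approved, 0 = denied.
decisions = [1, 1, 1, 0, 1, 0, 0, 1, 0, 0]
groups = ["a", "a", "a", "a", "a", "b", "b", "b", "b", "b"]

rates = selection_rates(decisions, groups)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 2))
```

A common rule of thumb treats a ratio below 0.8 as a signal worth investigating, but the right threshold depends on the domain and the applicable law, so it should be treated as a starting point rather than a verdict.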

Transparency & Explainability – Making AI Decision-Making Understandable

AI systems should be explainable, meaning users can understand how decisions are made. Black-box AI models, where decision-making is unclear, reduce trust and accountability. Implementing explainable AI (XAI) techniques, such as model interpretability tools, ensures users and regulators can audit and trust AI-driven processes (Towards Data Science).

Accountability & Human Oversight – Keeping Humans Involved in AI-Driven Decisions

AI should not operate independently without human supervision, especially in high-stakes areas like healthcare and criminal justice. Human oversight ensures AI decisions align with ethical standards and legal requirements. Organizations must establish clear accountability structures, ensuring AI developers and users are responsible for ethical AI implementation.

Privacy & Data Security – Protecting User Information from Misuse

AI systems rely on large amounts of data, much of it personal, which makes privacy and security essential. Companies need strong data protection policies, including encryption, data anonymization, and compliance with laws like GDPR and CCPA. Ethical AI practices help ensure that personal information is not misused, reducing the risk of data breaches and abusive surveillance.
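As a rough illustration of protecting identifiers before data reaches a training pipeline, the sketch below replaces an email address with a keyed hash and drops a name field. The field names, the `scrub_record` helper, and the placeholder key are assumptions for this example. Note that this is pseudonymization rather than full anonymization: whoever holds the key can still link records, so the output generally still counts as personal data.

```python
import hashlib
import hmac

def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()

def scrub_record(record, secret_key, id_fields=("email",), drop_fields=("name",)):
    """Drop fields that are not needed and pseudonymize linking identifiers."""
    cleaned = {}
    for field, value in record.items():
        if field in drop_fields:
            continue  # direct identifiers not needed for training are removed
        if field in id_fields:
            cleaned[field] = pseudonymize(str(value), secret_key)
        else:
            cleaned[field] = value
    return cleaned

# Placeholder key: in practice it would come from a secrets manager.
SECRET_KEY = b"replace-with-a-key-from-a-secrets-manager"

record = {"name": "Jane Doe", "email": "jane@example.com", "age_band": "30-39"}
scrubbed = scrub_record(record, SECRET_KEY)
print(scrubbed)
```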

Case Study: Apple Card and Gender Bias

In 2019, Apple Card, the credit card Apple launched with Goldman Sachs as the issuing bank, faced scrutiny for alleged gender bias in its algorithmic credit decisions. Several customers reported that women received significantly lower credit limits than their male partners, even with similar financial profiles. Tech entrepreneur David Heinemeier Hansson said that his wife, despite having a higher credit score, was assigned a credit limit 20 times lower than his. The complaints prompted an investigation by the New York State Department of Financial Services (DFS Report), which did not find unlawful discrimination but criticized the lack of transparency around how credit decisions were made. The case underscores the need for transparency, fairness audits, and diverse training data in AI-driven financial systems.

Best Practices for Ethical AI Development

Building ethical AI needs careful planning and action to avoid bias, improve transparency, and ensure accountability. Here are some best practices for creating responsible AI systems that focus on fairness, trust, and human oversight.

4.1 Use Diverse and Representative Datasets

A major source of unfairness in AI is unrepresentative training data. If a model learns from data that underrepresents certain groups, it can perform worse for those groups or treat them unfairly. To build fair AI, training data should reflect the people the system will affect, including across race and gender. Before training a model, developers should check that their data is balanced.

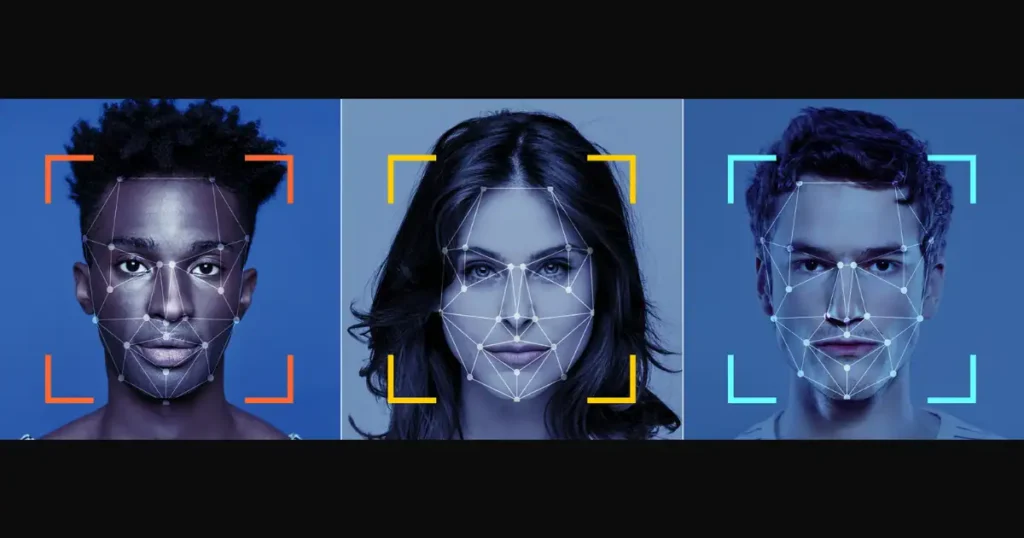

Case Study: Bias in Facial Recognition Technology

Several studies have shown that facial recognition AI is less accurate for people of color, largely because of unrepresentative training data. For example, a 2018 MIT study found that commercial facial analysis systems had markedly higher error rates for darker-skinned individuals, especially darker-skinned women (MIT Sloan). Errors of this kind in deployed facial recognition systems have been linked to wrongful arrests and discrimination. This highlights the need for diverse datasets to improve AI accuracy and fairness.
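Checking whether a dataset is balanced can start with something as simple as comparing each group's share of the data against a reference share. The sketch below assumes a hypothetical list of group annotations, a reference distribution, and a 5-point tolerance; all three are illustrative choices, not fixed standards.

```python
from collections import Counter

def representation_report(labels, reference_shares, tolerance=0.05):
    """Compare each group's share of the dataset with a reference share."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    total = len(labels)
    report = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total
        report[group] = {
            "observed": observed,
            "expected": expected,
            # Flag groups that fall short of the reference by more than the tolerance.
            "underrepresented": observed < expected - tolerance,
        }
    return report

# Hypothetical annotations for a face dataset.
labels = ["lighter"] * 80 + ["darker"] * 20
reference = {"lighter": 0.5, "darker": 0.5}

report = representation_report(labels, reference)
for group, stats in report.items():
    print(group, stats)
```

A balanced count is only a first step: a group can be well represented in number and still be poorly served if its examples are lower quality or less varied.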

4.2 Implement AI Fairness Testing

AI fairness testing is crucial for detecting and mitigating bias in machine learning models. Developers should use bias detection tools such as IBM AI Fairness 360 and Google’s What-If Tool to analyze how AI makes decisions.

- Counterfactual testing involves altering variables like gender or race to see if the AI’s decision changes. This helps identify and correct biases in AI predictions.

- Regular auditing ensures AI models remain fair over time, preventing unintended bias from creeping in after deployment.

Organizations can improve AI reliability and prevent discriminatory outcomes by implementing fairness testing.
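Counterfactual testing, as described above, can be sketched in a few lines. The example uses a deliberately biased toy model so the test has something to catch; `toy_credit_model`, the applicant fields, and the attribute values are all hypothetical and stand in for a real model and schema.

```python
def counterfactual_flips(predict, records, attribute, values):
    """Return records whose prediction changes when only `attribute` changes."""
    flagged = []
    for record in records:
        outcomes = {}
        for value in values:
            variant = {**record, attribute: value}  # copy with one field altered
            outcomes[value] = predict(variant)
        if len(set(outcomes.values())) > 1:
            flagged.append((record, outcomes))
    return flagged

def toy_credit_model(applicant):
    """Deliberately biased toy rule, used only to demonstrate the test."""
    score = applicant["income"] / 1000
    if applicant["gender"] == "female":
        score -= 10
    return score >= 50

applicants = [
    {"income": 55000, "gender": "female"},
    {"income": 90000, "gender": "male"},
    {"income": 30000, "gender": "female"},
]

flagged = counterfactual_flips(toy_credit_model, applicants, "gender", ["female", "male"])
for record, outcomes in flagged:
    print(record, outcomes)
```

Passing this test does not prove a model is fair. Other features can act as proxies for a protected attribute, so counterfactual checks work best alongside group-level metrics and regular audits.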

4.3 Ensure Transparency in AI Models

AI decision-making should be explainable and understandable to users, regulators, and stakeholders. Explainable AI (XAI) techniques allow users to understand why an AI made a specific decision.

- Transparency tools such as SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) help break down AI predictions into human-readable insights.

- Businesses can improve trust by adopting open AI policies that disclose how AI models function and how decisions are made.

Without transparency, AI risks becoming a “black box” where decisions lack accountability and oversight.
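To give a feel for what tools like SHAP report, the sketch below computes exact Shapley values by hand for a tiny model with three features. It does not use the SHAP library, and it only scales to a handful of features because it enumerates every feature subset. The `toy_score` model, its coefficients, and the baseline applicant are assumptions made for this example.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, instance, baseline):
    """Exact Shapley attribution of predict(instance) - predict(baseline)."""
    features = list(instance)
    n = len(features)

    def value(subset):
        # Features in `subset` take the instance's value; the rest stay at baseline.
        mixed = {f: (instance[f] if f in subset else baseline[f]) for f in features}
        return predict(mixed)

    contributions = {}
    for feature in features:
        others = [f for f in features if f != feature]
        total = 0.0
        for size in range(n):
            # Standard Shapley weight for a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                with_feature = value(set(subset) | {feature})
                without_feature = value(set(subset))
                total += weight * (with_feature - without_feature)
        contributions[feature] = total
    return contributions

def toy_score(x):
    """Hypothetical linear credit score used only for illustration."""
    return 0.5 * x["income"] + 2.0 * x["years_employed"] - 1.0 * x["debt"]

applicant = {"income": 60, "years_employed": 5, "debt": 20}
baseline = {"income": 40, "years_employed": 2, "debt": 10}

attributions = shapley_values(toy_score, applicant, baseline)
print(attributions)
```

The attributions add up to the difference between the applicant's score and the baseline score, which is what makes this kind of explanation easy to present to a non-technical reviewer.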

4.4 Maintain Human Oversight in AI Decision-Making

AI should not operate without human intervention, especially in critical areas like healthcare, hiring, and criminal justice. Human-in-the-loop (HITL) systems allow experts to review and validate AI decisions before they affect people’s lives.

Example: The Impact of AI Errors in Medical Diagnoses

In healthcare, advanced AI diagnostic tools assist doctors in identifying various diseases and health conditions. These tools analyze patient data to offer insights and highlight potential issues. However, research indicates that AI can make errors in diagnosis if the information it relies on is biased or incomplete, such as outdated medical records.

If doctors do not carefully review the AI’s findings, it could result in incorrect treatments and serious health risks for patients. By involving medical professionals in the AI diagnosis process, we can ensure that the information is accurate and prioritize the safety and well-being of patients.
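A human-in-the-loop setup often comes down to a routing rule that decides which AI outputs a person must review. The sketch below is one possible shape for such a rule; the `Prediction` class, the 0.90 threshold, and the label names are assumptions for illustration, and a real clinical workflow would set them with domain experts.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    case_id: str
    label: str
    confidence: float  # model's probability for `label`, between 0 and 1

def route(prediction, threshold=0.90, always_review=("malignant",)):
    """Decide whether a prediction may proceed or needs a human reviewer."""
    if prediction.label in always_review:
        return "human_review"  # high-stakes outcomes are always reviewed
    if prediction.confidence < threshold:
        return "human_review"  # the model is not confident enough
    return "auto_accept"

queue = [
    Prediction("case-1", "benign", 0.97),
    Prediction("case-2", "benign", 0.60),
    Prediction("case-3", "malignant", 0.99),
]

for prediction in queue:
    print(prediction.case_id, route(prediction))
```

In the most sensitive settings, every case can be sent to a reviewer by setting the threshold above 1.0, with the model's output serving only as a second opinion.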

Challenges in Ethical AI Development

Developing ethical AI comes with significant challenges, as technology evolves faster than regulations, and biases often remain hidden within AI models. Addressing these challenges requires a collaborative effort from governments, businesses, and researchers to ensure AI is fair, transparent, and accountable.

5.1 Rapid AI Advancements vs. Slow Regulations

AI technology is advancing quickly, and legal frameworks are struggling to keep up. Many governments are still drafting AI regulations, so companies often have to set their own rules, which can lead to inconsistent ethical standards. Without adequate oversight, AI systems may make biased or harmful decisions before laws are in place to protect the people affected. It’s important to strike a balance between fostering innovation and making sure AI is used responsibly.

5.2 Lack of Global AI Standards and Ethical Alignment

Countries have varying approaches to AI ethics, creating inconsistencies in global AI regulations. For example, the EU AI Act enforces strict AI risk classifications, while the U.S. focuses on industry-led guidelines. This lack of alignment makes it difficult for multinational companies to comply with ethical standards across different regions. Universal AI ethics principles can help create a more standardized and accountable AI landscape.

5.3 Difficulty in Identifying Hidden Biases in AI Models

AI models learn from vast datasets, but biases often remain undetected until the system is deployed. Hidden biases can emerge from historical inequalities, incomplete data, or flawed algorithms, leading to unfair outcomes. Traditional testing methods, which often focus on overall accuracy, may not reveal these biases. It is therefore crucial to run fairness audits, use bias detection tools, and involve diverse testing teams before deployment.
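One reason hidden biases slip through is that a single accuracy number averages over everyone. A fairness audit can instead break error rates down by group, as in the sketch below. The function name, the binary labels, and the sample data are assumptions for this example.

```python
from collections import defaultdict

def error_rates_by_group(y_true, y_pred, groups):
    """Return false positive and false negative rates for each group."""
    counts = defaultdict(lambda: {"fp": 0, "fn": 0, "neg": 0, "pos": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        c = counts[group]
        if truth == 1:
            c["pos"] += 1
            c["fn"] += int(pred == 0)  # missed a true positive
        else:
            c["neg"] += 1
            c["fp"] += int(pred == 1)  # raised a false alarm
    return {
        g: {
            "fpr": c["fp"] / c["neg"] if c["neg"] else None,
            "fnr": c["fn"] / c["pos"] if c["pos"] else None,
        }
        for g, c in counts.items()
    }

# Hypothetical evaluation set: 1 = positive outcome, 0 = negative outcome.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 0, 0, 1, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

audit = error_rates_by_group(y_true, y_pred, groups)
print(audit)
```

In this sample the model is 75% accurate overall, yet every one of its mistakes falls on group "b", which is exactly the kind of pattern an aggregate metric hides.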

5.4 Solutions: Collaboration Between Tech Companies, Researchers, and Policymakers

Solving AI ethics challenges requires joint efforts from multiple stakeholders:

- Tech Companies must adopt fairness audits, transparent AI policies, and ethical AI training.

- Researchers should develop new bias detection tools and frameworks to enhance fairness.

- Policymakers must create and enforce ethical AI regulations that keep pace with technological advancements.

Conclusion

Ethical AI development matters not just for technology but for society as a whole. As AI continues to shape industries and influence important decisions, we need to focus on fairness, transparency, accountability, and privacy. Biased AI systems can lead to discrimination, erode trust, and cause harm, so it’s essential for businesses, researchers, and policymakers to make ethical standards a priority.

By using diverse datasets, testing for fairness, having human oversight, and supporting global AI regulations, we can create AI systems that benefit everyone. The future of AI is all about responsible innovation, and by committing to ethical practices, we can help build a fairer and more inclusive digital world for all.

Frequently Asked Questions (FAQs)

1. What is ethical AI and why is it important?

Ethical AI refers to the development and use of artificial intelligence in a way that aligns with human values such as fairness, accountability, privacy, and transparency. It’s important because unethical AI can lead to discrimination, biased decisions, privacy violations, and a loss of public trust in technology.

2. How can bias enter an AI system?

Bias often enters AI systems through the data used to train models. If the data reflects historical or societal inequalities—such as gender, racial, or economic bias—then the AI may learn and replicate those patterns in its decisions. It’s critical to use diverse, representative datasets and conduct fairness testing to detect and reduce these biases.

3. What are some real-world examples of unethical AI?

Examples include:

- Amazon’s AI recruiting tool that downgraded female applicants.

- Facial recognition systems misidentifying people of color at higher rates.

- The Apple Card controversy, where women received lower credit limits.

These cases show how unchecked AI can lead to unfair treatment and regulatory scrutiny.

4. What role does explainability play in ethical AI?

Explainability ensures that AI decisions are understandable to users, regulators, and stakeholders. This builds trust, makes systems auditable, and helps identify unfair or incorrect outcomes. Tools like SHAP and LIME are commonly used for model explainability.

5. How can companies ensure their AI systems are ethical?

Companies can:

- Use diverse datasets.

- Conduct regular bias and fairness audits.

- Maintain human oversight in decision-making.

- Follow local and global regulations like the EU AI Act.

- Be transparent about how their AI systems work.

6. Are there laws that govern ethical AI development?

Yes, many countries are introducing regulations:

- The EU AI Act classifies AI by risk level and sets strict rules.

- GDPR, in force in the EU since 2018, governs how personal data is processed, including by AI systems.

- The U.S. is developing industry-led guidelines.

More global cooperation is needed to standardize ethical AI practices.